20 Million Reports: The Online Child Exploitation Crisis

The safety of children online has become one of the most pressing challenges of our digital age. In 2024, the CyberTipline received 20.5 million reports of suspected child sexual exploitation—more than 56,000 reports every single day. Early 2025 data reveals the crisis is accelerating.

Reports involving generative AI rose by 6,344% from the first half of 2024 to the first half of 2025. This explosive growth underscores the critical need for greater awareness among parents, educators, and communities, as well as stronger action from technology platforms, law enforcement, and policymakers.

_____________________

About the CyberTipline

The CyberTipline, operated by the National Center for Missing & Exploited Children (NCMEC), serves as the primary reporting system in the United States for child sexual abuse and exploitation. It functions as a centralized hotline where parents, the public, and online service providers can report suspected cases of child sexual exploitation, including child sexual abuse material (often called child pornography), online enticement, trafficking, and other abusive content.

Federal law requires internet providers, social media platforms, cloud companies, and other online platforms to report suspected child sexual exploitation that they become aware of to the CyberTipline. NCMEC analyzes incoming reports and forwards actionable information to law enforcement agencies, including Internet Crimes Against Children (ICAC) task forces, helping to rescue victims and stop perpetrators.

_____________________

The Scale of the Problem

The numbers are staggering. In 2024 alone, the CyberTipline received 20.5 million reports of suspected child sexual exploitation, accompanied by 62.9 million related images, videos, and files.

These figures count only reported cases, so they likely understate the true scale of the problem. An overwhelming 84% of reports resolve to locations outside the United States, showing that online child exploitation is a borderless crime that requires international cooperation and a coordinated response.

_____________________

The Surge in Online Exploitation

The data reveals dramatic increases across multiple forms of child exploitation. Online enticement rose by 55% from 2023 to 2024. In online enticement, adults communicate with children for the purpose of sexual exploitation or sextortion (coercing victims into providing sexual images or acts through threats or manipulation). This rise suggests that predators are becoming more aggressive and sophisticated in targeting young victims.

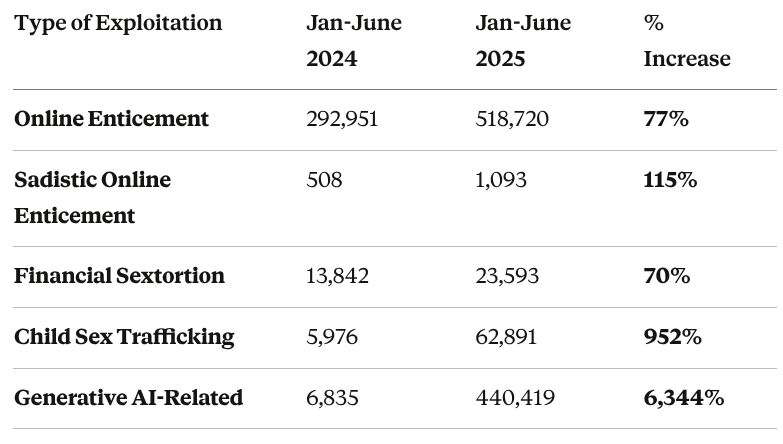

Comparing the first half of 2024 to the first half of 2025, the acceleration becomes even more apparent:

Source: National Center for Missing & Exploited Children

These increases are not just statistics; they represent real children facing manipulation, coercion, and harm. The 77% increase in online enticement reports and the 952% surge in child sex trafficking reports within a single year suggest that predators are exploiting new technologies and methods to target vulnerable youth.

_____________________

The Generative AI Threat

Perhaps the most alarming trend is the emergence of generative AI as a tool for exploitation. Reports involving generative AI skyrocketed by 6,344% from the first half of 2024 to the first half of 2025, climbing from 6,835 to 440,419 reports, roughly 64 times the earlier total.

Generative AI can be misused to create synthetic child sexual abuse material, manipulate existing images, or make impersonation and grooming more convincing. The speed and scale of this increase suggest that as AI tools have become more accessible, bad actors have quickly adapted them for exploitation.

_____________________

How Exploitation Happens

Exploitation typically follows a calculated, multi-stage process. Understanding how predators operate can help parents, educators, and young people recognize warning signs.

The Grooming Process

Initial Contact: Predators go where children spend time—gaming platforms like Roblox, Fortnite, and Minecraft, or social media apps like Instagram, Facebook, and TikTok. They pose as peers, potential romantic partners, or friendly strangers.

Trust-Building: Through conversations about games, hobbies, or school stress, predators build emotional connection over time, offering attention and validation.

The Migration (Critical Warning Sign): Predators frequently push to move conversations to private messaging apps with less monitoring—Discord, WhatsApp, Snapchat, or Telegram. They claim it's "easier to chat there." This is a major red flag.

Escalation: On private platforms, predators introduce sexual content or make inappropriate requests. Because messages on many of these apps can disappear automatically, this activity is difficult to detect.

Common Tactics

Sextortion: Coercing victims into sharing sexual images, then threatening to distribute them unless the victim complies with further demands.

Financial Exploitation: Using money, gift cards, or fake job offers to lure children into creating exploitative content.

Live-Streaming Abuse: Paying facilitators in other countries to abuse children in real time via video platforms.

AI-Manipulated Content: Using generative AI to create synthetic abuse material, manipulate innocent images, or impersonate trusted individuals.

The cross-platform nature of exploitation means monitoring one app provides only a partial picture. Predators deliberately exploit the fragmented nature of children's online lives, moving between platforms to avoid detection.

_____________________

Where Exploitation Happens: Platform Analysis

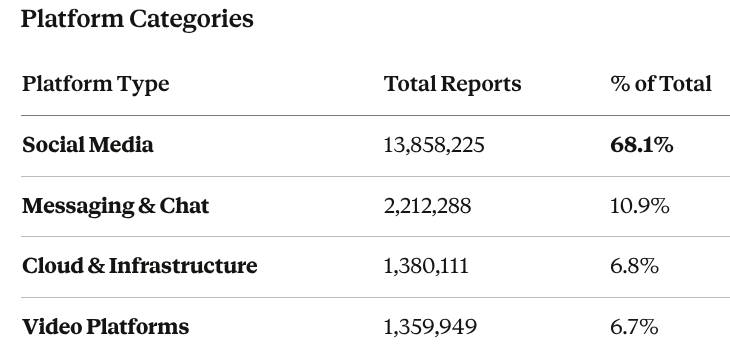

The data reveals that social media platforms account for the majority of reported exploitation:

Source: National Center for Missing & Exploited Children

More than two-thirds of all reports come from social media platforms, where children spend significant time connecting with peers, sharing content, and building online identities.

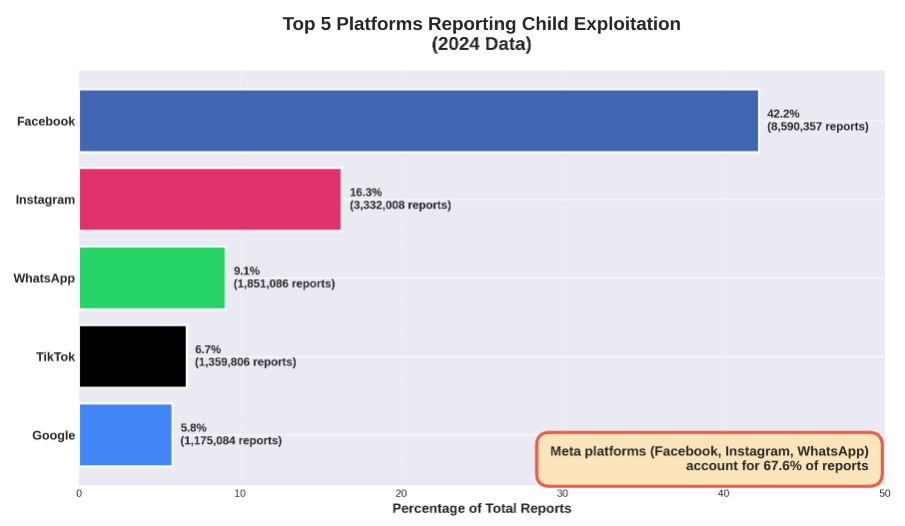

Source: National Center for Missing & Exploited Children

Meta's platforms (Facebook, Instagram, and WhatsApp) alone account for 67.6% of all reports. This concentration raises important questions about platform design, content moderation, and child safety features.

_____________________

The Global Landscape

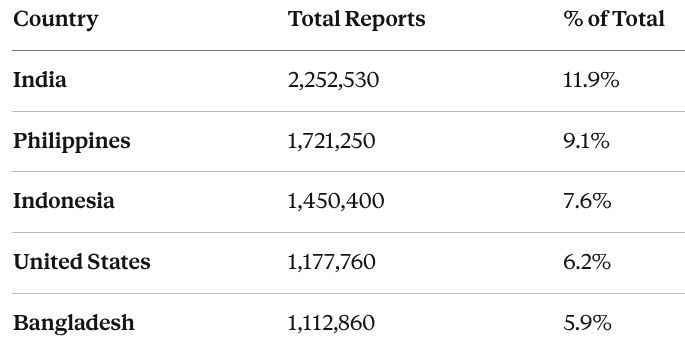

Online child exploitation is a worldwide crisis. While reports resolve to locations in more than 200 countries and territories, five countries account for the highest volumes:

Source: National Center for Missing & Exploited Children

These five countries alone account for over 40% of globally resolved reports. The high volume from South and Southeast Asia likely reflects rapid internet adoption outpacing digital literacy and legal protections. This can create a large pool of vulnerable users that organized crime syndicates exploit at scale, often operating out of unregulated facilities with limited law enforcement intervention.

_____________________

What This Means and What We Can Do

The dramatic increases in reports, particularly those involving generative AI, demonstrate that the threat landscape is evolving faster than protective measures. Here's what stakeholders can do:

Parents and Caregivers:

Delay children's access to social media platforms until age 16 and limit unsupervised technology use

Teach children to communicate only with people they know and trust in real life, not strangers or unverified contacts

Be alert to requests to move conversations to different apps—this is a major warning sign

Watch for warning signs: withdrawal, secretiveness about device use, or unexplained gifts

Technology Companies:

Invest in detection systems that identify misuse of AI and grooming patterns across platforms

Implement age verification and private account defaults for young users

Provide enhanced parental controls

Policymakers:

Update laws to address emerging technologies and cross-border crimes

Fund law enforcement training and resources for investigating online crimes against children

Require meaningful safety standards for platforms popular with young users; for example, Australia has banned social media for children under 16, and several European countries are considering similar bans or stricter parental controls

Educators and Communities:

Integrate digital literacy and online safety into K-12 curricula: national organizations such as Common Sense Media provide vital resources, and local programs such as Teen Esteem deliver engaging safety education in East Bay classrooms in the San Francisco Bay Area

Create safe communication and reporting channels for young people

_____________________

Looking Forward

The data is clear: online child exploitation is accelerating. With 20.5 million reports in 2024, followed by a 77% increase in online enticement reports and a 6,344% increase in reports involving generative AI between the first halves of 2024 and 2025, protective measures are struggling to keep pace with the threat.

The concentration of reports on social media platforms (68%), the global nature of the crisis (84% outside the U.S.), and the rapid adoption of new technologies by predators demand urgent action from technology companies, law enforcement, policymakers, and communities.

Every report represents a child at risk. Protecting the next generation online requires immediate, coordinated action.